Talent · 4 MIN READ · YANEK KORFF · DEC 5, 2017 · TAGS: Employee retention / Great place to work / Management / Metrics

Constant learning feeds the heart and soul of a security analyst. But constant learning requires a constant stream of “interesting stuff” to analyze. Stuff like attacks, compromises, malware, new methodologies, and so on. And that stuff is the exact opposite of what most businesses want: a boring security report and the satisfaction that comes with checking the box and saying “Yup, we reviewed all of our security incidents and addressed everything we found.” But sometimes, news like this is anything but rosy.

Imagine you’re a security analyst at your “average” security operations center (SOC). You work a pretty long shift: 11 or 12 hours. Fortunately, the work is super dynamic and extra interesting. There are alerts… lots of them. But they’re mostly the same. You don’t need to learn much about them, because the event IDs tell you which plays to follow in your runbook. Most of them end with “reimage the machine.” After a couple of months, you’ve got all the plays memorized.

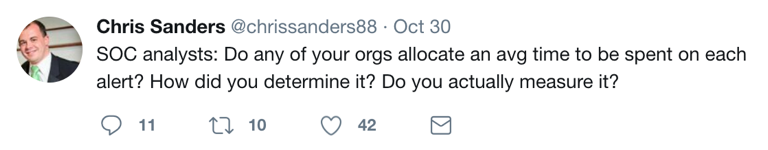

This is good. After all, you’ve got a 35-second target to triage each alert before you’re supposed to either (a) close it out as a false positive; (b) escalate it as a true positive; or (c) call a senior analyst because you can’t decide between (a) and (b). You spend the rest of your time figuring out the call tree so you know whom to notify, since your published SLAs only give you 10 minutes to make the call. Tick tock.

Every so often, you see something interesting and set it aside for later. Maybe after your shift, or when you need a mental break from the monotony, you can explore that pcap or dig into that piece of malware you found. Gotta make sure you don’t get caught by management, though. The last time your shift manager found out you’d reported MORE than what the customer expected, you got in trouble. “We could end up in a lot of hot water if customers expected that higher service level all the time.” That’s a good point.

Eventually the number of new things you find trickles down to nothing and you tap out. After all, you’ve been there forever… like 12 months… or maybe through sheer force of will, you’ve made it 14.

Speed at the price of quality or completeness

Perhaps you’re reading this and thinking, “This is ridiculous. You can’t be expected to do effective analysis in 35 seconds, no matter how good your tooling.”

I agree! By optimizing for speed you drive up volume and drive down quality.

So, before you go putting Ferrari engines in fire trucks, let’s briefly examine the siren call of time. As it turns out, in most SOCs, time is the input metric, not the output metric. In other words, we can control how long we spend on stuff… so we do, above all else.

Getting your input and output metrics mixed up can result in some wacky decisions. For example, it’s easy to focus on specific process improvements in a vacuum without considering the overall “system.” In the example above, narrowly focusing on triage time can ultimately increase the overall time spent responding to security incidents because of missed alerts and misdiagnoses, or even a steady increase in the volume of incoming alerts.
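To make that trade-off concrete, here’s a back-of-the-envelope sketch. Every number in it is made up for illustration (the miss rates and the four hours of rework are assumptions, not measurements from any SOC), and the function name is hypothetical. The only point is that the cost of a missed or misdiagnosed alert belongs in the same equation as triage time.

```python
def expected_minutes_per_alert(triage_minutes, miss_rate, rework_minutes):
    """Average end-to-end cost of one alert: triage time plus expected rework.

    miss_rate is the fraction of alerts that get missed or misdiagnosed and
    come back later as rework_minutes of incident response.
    """
    return triage_minutes + miss_rate * rework_minutes


# Hypothetical numbers only: a 36-second triage that gets 5% of calls wrong
# versus a 5-minute triage that gets 1% wrong, each miss costing 4 hours.
rushed = expected_minutes_per_alert(triage_minutes=0.6, miss_rate=0.05, rework_minutes=240)
careful = expected_minutes_per_alert(triage_minutes=5.0, miss_rate=0.01, rework_minutes=240)

if __name__ == "__main__":
    print(f"rushed:  {rushed:.1f} minutes per alert")
    print(f"careful: {careful:.1f} minutes per alert")
```

Under these assumed inputs the “fast” process is the slower one overall. Your real numbers will differ, which is exactly why you want to measure the whole system rather than one step.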

Rising from the fire

If you’ve read The Phoenix Project, you’re familiar with “The Three Ways.” The book spins a painful parable of Bill the IT Manager learning how to bring order to chaos with the help of a mysterious Lean-minded guru named Erik. Turns out, Erik has a mental model for creating order using three principles. I think this is as relevant in a SOC as it is in DevOps. Take a look.

Way #1 — Flow.

It’s our obligation when building and operating a system (in this case, security operations) to understand how work moves through our process “left to right” and not let “local optimization cause global degradation.” What does this mean? In a SOC, it means you don’t drive down tier-1 work into monotonous button-pushing that increases errors in the system overall. It means don’t be the “average SOC” (see above). Instead, make sure you’ve got the tooling in place to optimize specific parts of the analysis and investigation process and that you can measure effects across the entire system.

Way #2 — Feedback.

Information has to flow backwards through an otherwise one-way process, so you can solve defects earlier in the process. In other words, don’t dam upstream.

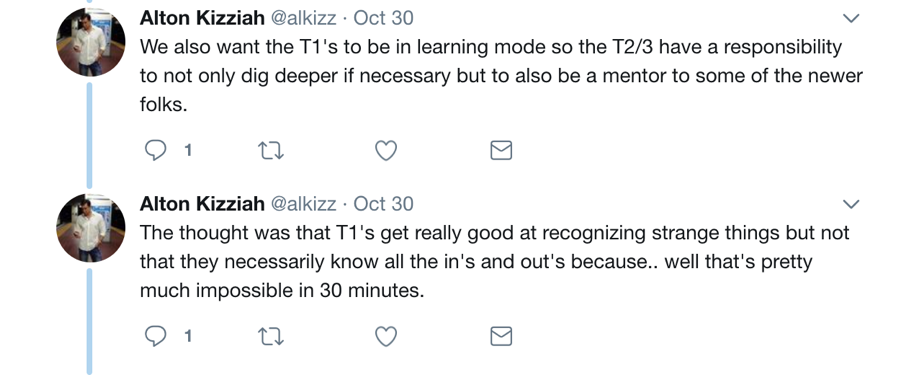

Way #3 – Experimentation and Learning.

You knew it was coming. In security operations, this means you have to take risks, try new things, be transparent about failure and learn from your mistakes. There’s no perfect process and no perfect system. Any system that can’t adapt and evolve will eventually fail.

This is doubly true for the people who live in the system. If the environment doesn’t support experimentation, accept failure and encourage learning and growth… they’ll leave. And losing them costs you twice: once for the time and money required to find and train new fearless candidates, and again for the investment you’ve already made in the people who walk out the door.

So now what?

In my opinion, the most impactful change you can make to create a learning environment is to flip your time-based metrics. Make them output metrics instead of input metrics. How? Eliminate any specific time targets (or objectives) for analysts and give them freedom to exercise their own judgement about how deeply (or how long) to investigate alerts.

Then, monitor how much time each step in your process takes… and solve for time by tweaking the tools and automation you’re using. Also, make sure feedback loops are built into the process to address issues “upstream.”
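Here’s a minimal sketch of what treating time as an output metric might look like. It assumes your ticketing or case management tool can export a timestamp each time an alert enters a new stage; the event format, stage names and function names below are all hypothetical. Nothing here sets a target. It just reports how long each stage actually took so you know where tooling or automation would help most.

```python
from collections import defaultdict
from datetime import datetime
from statistics import median

# Hypothetical export: (alert_id, stage, ISO timestamp when the stage began).
EVENTS = [
    ("A-1", "triage", "2017-12-05T09:00:00"),
    ("A-1", "investigate", "2017-12-05T09:02:00"),
    ("A-1", "closed", "2017-12-05T09:20:00"),
    ("A-2", "triage", "2017-12-05T09:05:00"),
    ("A-2", "investigate", "2017-12-05T09:06:00"),
    ("A-2", "closed", "2017-12-05T09:36:00"),
]


def stage_durations(events):
    """Return {stage: [seconds spent in that stage, one entry per alert]}."""
    by_alert = defaultdict(list)
    for alert_id, stage, timestamp in events:
        by_alert[alert_id].append((datetime.fromisoformat(timestamp), stage))

    durations = defaultdict(list)
    for steps in by_alert.values():
        steps.sort()  # order each alert's stages by start time
        # A stage lasts from its own start until the next stage starts.
        for (start, stage), (end, _next_stage) in zip(steps, steps[1:]):
            durations[stage].append((end - start).total_seconds())
    return dict(durations)


def median_by_stage(events):
    """Median seconds per stage, observed across all alerts."""
    return {stage: median(values) for stage, values in stage_durations(events).items()}


if __name__ == "__main__":
    for stage, seconds in median_by_stage(EVENTS).items():
        print(f"{stage}: median {seconds / 60:.1f} min")
```

The median is used here rather than the mean so that one marathon investigation doesn’t skew the picture. That’s a design choice for the sketch, not a rule.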

While you’re doing all of this, take another look at your hiring practices and make sure your hiring approach supports the new expectations you’ve put in place.

Over time, you’ll find you’ve grown a substantially more capable team that’s not only happier, but also delivering results far beyond what you’re seeing today.

—

This is the final part of a five-part series on key areas of focus to improve security team retention. Read from the beginning: 5 ways to keep your security nerds happy.